One of the more interesting parts of CG Rendering is looking back at the previous generations of hardware and drilling down into designs based on technical limitations.

Most, if not all, of these bottlenecks have long since been relegated to the past. There are a handful of exceptions (usually tied to very low resolution screens), but there is still a lot to be learned by attempting to visualize these worlds on modern software and hardware.

There’ll be a bit of tech talk, so here’s a quick run-down on what the terms mean in Normal English:

Albedo/Diffuse; the base colour of something. Think of what you’d see a surface as, under direct, perpendicular white light. Affected by light position, NOT view position.

Roughness/Smoothness; pretty self-explanatory. Typically a scale of 0-1, with Smoothness being the inverse of Roughness (1 minus Roughness).

Specular Map; the colour of the reflected highlight. Affected by the view position and light position (it’s light that bounces from the source, off the surface, to the eye).

Metallic Map; a scale of 0-1 for how metallic an object is, again pretty self-explanatory. HOWEVER, it does get funny with non-metallic but conductive materials…

Early 7th-Gen to Late 8th-Gen: Moving from Blinn-Phong to PBR.

This is the latest ‘big fundamental change’ that has moved nearly everyone to a different asset pipeline. Not counting realtime ray-tracing (yet…), it represents possibly the biggest, yet often least understood, shift in graphics development of the 2010s, ahead of tessellation and better instancing.

The Souls games show probably the biggest change here. In going from the old-school rendering of Dark Souls 1 to the PBR Dark Souls 2, players noticed a huge improvement in environment quality and realism. Majula, DS2’s hub area, is a masterclass in PBR asset creation. Everything is handled properly: the ground textures, with their dirt > mud > puddle transitions; the metallic masks for rusting metal and paint (non-conductive layers over conductive steel); and the transmission of light through fabric on ruined dwellings (light passing through cloth is deducted from light being reflected).

Having said that, there are some baffling decisions; Havel’s and Siegmeyer’s sets managed to become metallic (from stone?) and completely 100% dull (from iron?) respectively in the move.

So, one of the strange questions is: if you have properly authored Diffuse/Roughness/Spec textures, couldn’t you get the same results as an Albedo/Smoothness/Metallic set, just by using slightly different lighting math?

The answer is…yes!

In fact, many rendering systems build an internal spec map from the Albedo and Metallic textures. It’s one of the reasons why support for old-style specular maps has been so common, fast, and good; effectively it’s already there. The other reason is that we still have all those old assets and don’t want to waste them.
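As a rough illustration of that internal conversion, here is a minimal sketch in Python. It assumes the common convention of a flat 4% reflectance for non-metals; the name `metallic_to_specular` and the constant are mine, not taken from any particular engine, and real renderers often add further tweaks (energy conservation terms, for instance).

```python
# Sketch only: derive Diffuse + Specular colours from Albedo + Metallic.
# DIELECTRIC_F0 is an assumed convention (about 4% reflectance for non-metals).
DIELECTRIC_F0 = 0.04


def lerp(a, b, t):
    """Linear interpolation from a to b by t (0-1)."""
    return a + (b - a) * t


def metallic_to_specular(albedo, metallic):
    """Return (diffuse_rgb, specular_rgb) for an RGB albedo and a 0-1 metallic value."""
    # Metals have no diffuse colour; their albedo becomes the highlight tint.
    diffuse = tuple(c * (1.0 - metallic) for c in albedo)
    # Non-metals get a dim, neutral highlight; metals get a coloured one.
    specular = tuple(lerp(DIELECTRIC_F0, c, metallic) for c in albedo)
    return diffuse, specular


# Red plastic keeps its colour as diffuse, with a faint grey highlight.
plastic = metallic_to_specular((0.8, 0.2, 0.1), 0.0)
# Gold loses its diffuse entirely and tints the highlight instead.
gold = metallic_to_specular((1.0, 0.77, 0.34), 1.0)
```

Going the other way (guessing Albedo and Metallic from an old Diffuse/Spec pair) is lossier, which is one reason the old maps tend to be supported directly instead.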

Having said that, the rendering calculations are so different to the old Blinn-Phong systems that it’s considered a whole New Thing. And it does encourage a better, more accurate asset creation pipeline.

Early/Mid 6th-Gen to Late 8th-Gen: Moving to a Pixel-based approach.

The biggest change going from 6th- to 8th-gen is the move from vertex lighting or basic forward rendering to advanced forms of per-pixel lighting, such as forward+ or deferred.

This means that you’re generating, adding and using much more information with each pixel before it reaches the screen, and you’re combining things in the right order (ambient occlusion should only affect ambient light, not make all corners pitch black in broad daylight). It gives a more natural output: an image that is easier and nicer to look at, and more believable.
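The ambient occlusion point is easy to show with numbers. This is a simplified sketch (diffuse only, one light, function names made up for the comparison) of combining the terms in the right order versus the old shortcut of multiplying AO over the final result.

```python
def shade(albedo, direct_light, ambient_light, ao):
    """Combine lighting so that AO darkens only the ambient contribution."""
    return tuple(
        a * (d + amb * ao)
        for a, d, amb in zip(albedo, direct_light, ambient_light)
    )


def shade_naive(albedo, direct_light, ambient_light, ao):
    """Old shortcut: AO multiplies everything, including direct sunlight."""
    return tuple(
        a * (d + amb) * ao
        for a, d, amb in zip(albedo, direct_light, ambient_light)
    )


grey = (0.5, 0.5, 0.5)
sun = (1.0, 1.0, 1.0)
sky = (0.2, 0.2, 0.2)

# A fully occluded corner (ao = 0) that is still hit by direct sunlight:
lit_corner = shade(grey, sun, sky, ao=0.0)          # stays lit by the sun
black_corner = shade_naive(grey, sun, sky, ao=0.0)  # goes pitch black
```

With deferred or forward+ the individual terms are available separately at shading time, which is what makes the first version practical.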

Vegetation is one of the things that just isn’t going to make the cut. What might have worked at 360 or 480i simply doesn’t hold up at 1080p with modern lighting. Modern meshes are so much more defined, with better support for bending/deformation (more polys for a better curve) and higher-res textures for foliage detail and masks. In the examples below, I’ve replaced the original vegetation with free assets from the Unity Asset Store (UAS), and they use my own custom shader.

As the HDRP doesn’t support Terrain Grass by default (as of 2018.3, anyway), I used the new GPU-powered VFX system to generate it. Starting from the supplied VFX grass example, I modified it to use terrain height (from the heightmap) and ground colour (from the splatmap) to spawn grass particles that get pushed around by the player and wave in the wind.
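The real thing runs on the GPU inside the VFX system, so the following is only a CPU-side sketch of the spawning idea in Python. The function names, the bilinear sampler, and the layout of the maps (plain nested lists, with ground colour split into three channel grids) are all assumptions made for the example.

```python
import random


def bilinear(grid, u, v):
    """Sample a 2D grid (list of rows) at normalised coordinates u, v in 0-1."""
    rows, cols = len(grid), len(grid[0])
    x, y = u * (cols - 1), v * (rows - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, cols - 1), min(y0 + 1, rows - 1)
    fx, fy = x - x0, y - y0
    top = grid[y0][x0] * (1 - fx) + grid[y0][x1] * fx
    bottom = grid[y1][x0] * (1 - fx) + grid[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def spawn_grass(heightmap, ground_rgb, count, seed=0):
    """Scatter `count` blades; each gets a height from the heightmap
    and a colour from the ground colour map (three grids: R, G, B)."""
    rng = random.Random(seed)
    blades = []
    for _ in range(count):
        u, v = rng.random(), rng.random()
        position = (u, bilinear(heightmap, u, v), v)  # y comes from the terrain
        colour = tuple(bilinear(channel, u, v) for channel in ground_rgb)
        blades.append((position, colour))
    return blades


# Tiny 2x2 terrain that rises along one axis, with a uniform green ground colour.
heights = [[0.0, 1.0], [0.0, 1.0]]
green = ([[0.1, 0.1], [0.1, 0.1]], [[0.6, 0.6], [0.6, 0.6]], [[0.2, 0.2], [0.2, 0.2]])
blades = spawn_grass(heights, green, count=100)
```

Player push and wind would then be applied per particle each frame, which this sketch leaves out.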

The tree shader adds in a vertex animation system that probably should be standard by now. It relies on 3 masks and 3 variables. In the image, the red channel is for handling the trunk, from base (0) to tip (1). Green handles the branch, from trunk (0) to tip (1), and blue does the same for the leaves. This results in the reddish-yellow-looking mask that defines how the tree reacts to wind. Combined with a bending coefficient (or stiffness, take your pick) and a wind input, you can calculate how each part of the tree reacts to gusts, breezes, and strong winds.
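To make the mask idea concrete, here is a hedged Python sketch of how a per-vertex wind offset could be computed from the three channels. It is not the actual shader: the `bend` coefficients, the sway frequencies, and the squared trunk falloff are illustrative guesses.

```python
import math


def wind_offset(mask_rgb, wind_dir, wind_strength, time, bend=(0.5, 0.3, 0.1)):
    """Horizontal (x, z) displacement for a single vertex.

    mask_rgb:  (trunk, branch, leaf) weights, each 0 at the base and 1 at the tip.
    wind_dir:  normalised (x, z) wind direction.
    bend:      hypothetical bending coefficients for trunk, branch and leaf.
    """
    trunk, branch, leaf = mask_rgb
    # The trunk leans steadily downwind; squaring keeps the base planted.
    sway = bend[0] * trunk * trunk
    # Branches swing slowly, leaves flutter quickly (frequencies are made up).
    sway += bend[1] * branch * math.sin(time * 1.5)
    sway += bend[2] * leaf * math.sin(time * 8.0)
    amount = wind_strength * sway
    return (wind_dir[0] * amount, wind_dir[1] * amount)


# A vertex at the base of the trunk does not move at all...
root = wind_offset((0.0, 0.0, 0.0), (1.0, 0.0), wind_strength=2.0, time=3.0)
# ...while a leaf at the end of a branch near the top gets all three motions.
leaf_tip = wind_offset((0.9, 1.0, 1.0), (1.0, 0.0), wind_strength=2.0, time=3.0)
```

In a real shader the same maths would run in the vertex stage, with the masks read from vertex colours and the wind supplied as a global.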

Even basic systems like terrains were barely utilized back in those times. Things like texture-blended terrain materials were just too expensive if you were using even average-res textures, so a common practice was ‘tiled mosaics’ of terrain quads, with pre-prepared blends. Nowadays, to have 8 (or more) terrain textures blend nicely together is basically nothing, even using more advanced height- and slope-based transitions.
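For the curious, height-based blending is only a few lines of maths. The sketch below is one common formulation, written in Python for readability; the `depth` parameter and the function name are my own, and slope-based transitions are not covered.

```python
def height_blend(weights, heights, depth=0.2):
    """Sharpen splatmap weights using each layer's height-map sample.

    weights: painted splatmap weight per layer (0-1).
    heights: height-map sample per layer at this pixel (0-1).
    depth:   assumed transition width; smaller values give harder edges.
    """
    # Layers that are not painted here never win, however tall they are.
    scores = [(h + w) if w > 0.0 else 0.0 for w, h in zip(weights, heights)]
    cutoff = max(scores) - depth
    # Only layers within `depth` of the tallest one contribute.
    raised = [max(s - cutoff, 0.0) for s in scores]
    total = sum(raised)
    return [r / total for r in raised]


# Rock and sand painted 50/50: a plain blend would give a grey mush,
# but the rock's height map is much taller at this pixel, so it pokes through.
print(height_blend(weights=[0.5, 0.5], heights=[0.9, 0.1]))  # rock wins outright
print(height_blend(weights=[0.5, 0.5], heights=[0.5, 0.5]))  # even split
```

The effect is that sand settles into the cracks between rocks instead of fading uniformly over them, for roughly the cost of one extra texture channel per layer.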

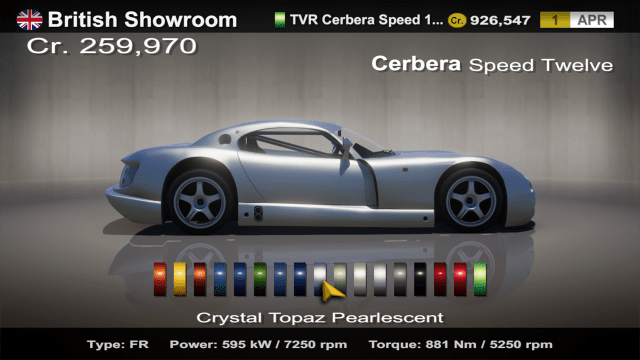

The transition to a more logical, reasoned approach does have some drawbacks though. Some simple ‘hack-style’ tricks that could give good results at a low performance cost now won’t work. One of the best examples is a super-simple car showroom, like the one in GT4 (NOT pictured above).

In previous generations, the reflection and shadow on the ground would be handled by much simpler shaders and techniques, but now that lighting engines have become more consolidated, it gets a bit more complicated. The direction-dependent soft shadowing around the car, the planar reflection and the static background (think screen-space UVs) mean that you need a “physically plausible” solution that can handle all those things at once, with a lighting engine you can’t mess with.

This highlights one of the strange ways a more modern system can be a step backwards. By making everything work properly, it makes it much harder to build the specific non-realistic or stylized scenes, especially when you want to mix in photo-real aspects.

*note that this was rendered before the release of the Measured Material Library, and its own car paint shaders*

Mobile to Late 7th-Gen: Moving the mountain to Mohammed?

Ok, what can we do with mobile assets? Mobile hardware limitations mean that poly count is on the low side, and texture size is often very, very low. Since advanced lighting often isn’t an option due to lack of grunt and the need to keep battery use and temp down, normal maps aren’t massively common (and considering the screen size, you might not notice them anyway). Oh, and several ‘normal’ level post-processing effects (SSAO, typically) aren’t fast enough on mobile, so the assets don’t come ready to use dynamic effects. And since tiny mobile GPUs hate fancy shaders, the assets won’t have any materials set up, nor any of the masks/maps needed for them.

So, if basically everything is off the table, what can you put back on it? Hero assets (things that are prominently on-screen for a long period of time) are typically much better than the normal assets. And since readability is such a high priority with the small screens, the designs and textures might be a bit more crisp than expected. This is going to be more effective if the assets are mostly large solid colours, or hard-surface (non-organic).

..

Environment art, unfortunately, isn’t going to be usable. Not only is the vegetation far too simplistic and lacking in detail, but the terrain, structures, and far off meshes (if there even are any) are not suitable for close examination. In these cases, I’ve replaced them with an underground cave pack, available on the UAS, along with my own custom shaders.

In addition, volumetrics have been added to soften the background environment and differentiate it from the style of the characters and creatures. By adjusting a handful of settings, the harshness of the lighting can be tailored to suit the location, era, and style.

FX especially isn’t suitable. Not that there typically is much (particle systems have something of an ‘upfront cost’ as they need to generate particle meshes), but what little there is really isn’t made to be more than a centimeter or two across.

…

9th-Gen and into the Future: Gran Turismo on the PS5 and Unity + RTX

Well, it looks like ray-tracing is finally around the corner, about to be released Soon™.

This is one of the many technical presentations by Polyphony during 2018, showcasing the very latest in rendering tech. As you can see from the demo of the Jag E-Type and McLaren P1 GTR (starting about 7:30 in) the value of raytraced lighting is pretty obvious.

The following presentation, about the IRIS rendering system at Polyphony Digital, can be downloaded here:

However, it’s in Japanese! I translated the important parts into Normal English for the Gran Turismo board “GTPlanet”. To view the translation, click the image below to be linked to the GTPlanet Forum post. Read along; they’re using some pretty neat tricks, and while some of the parts are likely for future use, quite a fair bit is applicable to modern game rendering.

Polyphony/SONY is not alone in bringing raytracing to games. Unity recently released an experimental build of their High-Definition Render Pipeline (HDRP) that uses the nVidia ray-tracing tech. Check out the blog page here.

This is arguably more interesting now than ever, as the recent driver updates for 10-series nVidia GPUs have unlocked the ability to do some ray-tracing. Typically, adding support for old hardware is a step back, but by making the tech available to a wider audience (all 10-series owners, not just 16- and 20-series owners) it might give developers and users a taste for the new lighting, and some vital experience in these early days.

It’ll certainly be interesting to see where this tech goes in the future, particularly on the hardware side: whether the manufacturers go down the specialty chip line, and how that fits into the current GPU architecture. Currently, there’s a wide range of variables for each chip: the speed, core count, available memory, limits on bandwidth, etc. Will this just become one more thing to worry about having some weird number, or will it be like the memory value, which seems to have settled at the 4GB, 8GB (and such) levels for generations?

There’s plenty of room to get it wrong too. Will a console launch with significantly less ray-tracing ability, and be left in the dust? Or will one launch with too much, so that games have to dump as much workload on it as they can for little effective gain? Could a Pro version of a console have extra ray-tracing ability as its party piece and justification?

Note that all these projects are by their nature transformative, being shown for educational and informative purposes, and pose no direct conflict with an existing product, nor, to the best of my knowledge, any upcoming product or release. No monetization nor commercialization of any kind is tied to these images, and source assets and / or project files will not be released. Thus, these works fall under Fair Use.

If you disagree with the above statement, know that you have the option to contact me, and I am open to answering questions and addressing concerns.